Lessons From A Chatbot Incident

written by Jeremiah Fowler || Cybersecurity Researcher

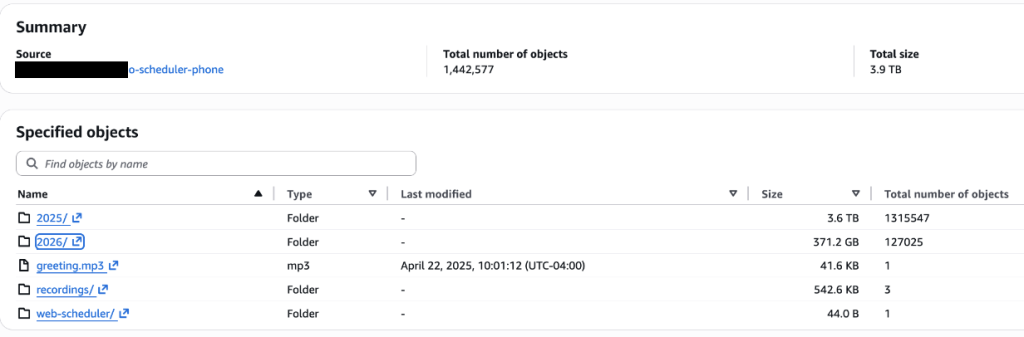

When AI Chatbots Become a Data Liability

The adoption of AI chatbots across industries has transformed customer service, scheduling, and operational workflows, but it has also introduced a new and often overlooked risk: the exposure of customer data. Recently, I discovered three publicly accessible databases containing approximately 3.7 million records belonging to Sears Home Services, the beloved retailer founded in 1892. These files consisted of chat transcripts, audio recordings, and text transcriptions of customer interactions, which included Personally Identifiable Information (PII) such as names, addresses, emails, and phone numbers, along with details about products and services.

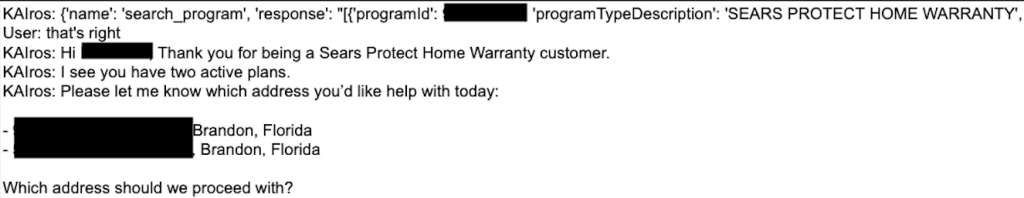

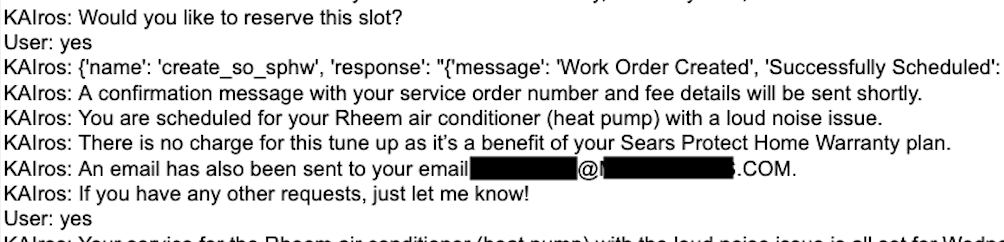

The databases have since been secured, but the incident highlights a critical issue for businesses that think AI chatbots are a silver bullet or a turnkey replacement for humans. AI bots are not just operational tools; they are effectively data collection systems that can become significant liabilities if they are improperly managed or their data storage is misconfigured. One of the screenshots shows a customer address (redacted) being transcribed from a service call into the database, which was unprotected and unencrypted.

AI-driven assistants can aggregate numerous data types into a single ecosystem. Datasets that contain detailed logs, metadata, and voice recordings can be used by attackers for identity reconstruction, targeted social engineering, or even biometric misuse. There is also a growing risk of biometric voice data being used to synthesize realistic voice clones for social engineering and other forms of fraud.

In addition to exposing user or customer PII, chatbot systems can also reveal internal logic, prompts, and other proprietary details.

Incident Insights

My report was covered by WIRED and multiple other media outlets, but I wanted to summarize the findings here for the BHIS community with a security-minded perspective to prepare for the future of AI risks. An important takeaway from this discovery is that these files were exposed not by a sophisticated cyberattack but by a basic security failure. In this case, the databases were neither password protected nor encrypted, making them accessible to anyone with a web browser.

Human error is still a serious issue in the world of data protection and security. The chances of a data incident only increase when third-party vendors are involved in developing or managing AI systems. This is why data governance and oversight should be a core part of your business. Even if it is a contractor or vendor that has the breach, at the end of the day this is still your data or the data of your customers.

By now, we all know (or should know) the risks of improper AI data management, such as not encrypting files that contain sensitive information. Far too often I see plaintext data exposed, but when I find files that are encrypted, I move on because the files are unreadable, and I don’t have a supercomputer (yet). It is a good idea to follow a zero-trust model where access is explicitly granted, continuously verified, and need-based. Data minimization and giving data a lifespan can also mitigate risks, since reducing the volume of stored data can reduce the potential impact of any breach.
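As a rough illustration of data minimization and giving data a lifespan, the sketch below redacts obvious PII from a transcript before it is stored and drops records older than a retention window. Everything here is an assumption made for the example: the two regular expressions cover only emails and US-style phone numbers, the 90-day `RETENTION` value is arbitrary, and the `stored_at` field name is hypothetical. It is not a description of any system involved in this incident.

```python
import re
from datetime import datetime, timedelta, timezone
from typing import Optional

# Illustrative patterns only; real PII detection needs far broader coverage
# (names, street addresses, account numbers, and so on).
EMAIL_RE = re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}")
PHONE_RE = re.compile(
    r"(?<!\d)(?:\+?1[\s.-]?)?\(?\d{3}\)?[\s.-]?\d{3}[\s.-]?\d{4}(?!\d)"
)

RETENTION = timedelta(days=90)  # hypothetical retention window


def redact(text: str) -> str:
    """Replace emails and US-style phone numbers with placeholders."""
    text = EMAIL_RE.sub("[EMAIL]", text)
    return PHONE_RE.sub("[PHONE]", text)


def is_expired(stored_at: datetime, now: Optional[datetime] = None) -> bool:
    """Return True when a record has outlived the retention window."""
    now = now or datetime.now(timezone.utc)
    return now - stored_at > RETENTION


def purge_expired(records: list, now: Optional[datetime] = None) -> list:
    """Keep only records that are still inside the retention window."""
    return [r for r in records if not is_expired(r["stored_at"], now)]
```

Calling `redact()` at ingestion time means the placeholder, not the raw value, is what ends up in storage, and running `purge_expired()` on a schedule limits how much data is available to expose if a database is ever left open.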

Organizations must now consider and plan for emerging AI-specific risks, especially when it comes to system logic, system prompts, guardrails, or internal decision-making processes that could be vulnerable to misuse or reverse engineering. For anyone reading this, it should already be clear how important continuous monitoring, scans for exposed assets, and regular security testing are to your business or industry. Security teams must clearly explain these threats and risks to decision makers in order to secure the funding and investment needed to identify vulnerabilities before they can be exploited. I always joke that no one has a budget for cybersecurity until they do, and it’s usually after a data incident.
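One simple form of scanning for exposed assets is to request each of your own endpoints without credentials and flag any that answer successfully. The following is a minimal sketch under that assumption, using only the Python standard library. The function names and the status labels are invented for this example, and it should only ever be pointed at assets you own or are authorized to test.

```python
import urllib.error
import urllib.request
from typing import Callable, Dict, Iterable


def classify(status: int) -> str:
    """Map an HTTP status from an unauthenticated request to a finding."""
    if status in (401, 403):
        return "auth-required"
    if 200 <= status < 300:
        return "EXPOSED"  # answered without any credentials
    return "inconclusive"


def http_status(url: str, timeout: float = 5.0) -> int:
    """Send one unauthenticated GET and return the HTTP status code."""
    try:
        with urllib.request.urlopen(url, timeout=timeout) as resp:
            return resp.status
    except urllib.error.HTTPError as err:
        return err.code  # urllib raises on 4xx/5xx; the code is still useful


def audit(
    urls: Iterable[str],
    get_status: Callable[[str], int] = http_status,
) -> Dict[str, str]:
    """Check each URL and return a finding per URL."""
    results = {}
    for url in urls:
        try:
            results[url] = classify(get_status(url))
        except OSError:  # covers URLError, timeouts, refused connections
            results[url] = "unreachable"
    return results
```

A scheduled job could run `audit()` over an inventory of storage and database endpoints and alert on any `EXPOSED` result. The `get_status` parameter exists so the check can be exercised with canned responses instead of live requests.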

We now face the reality that the rise of AI chatbots, virtual assistants, and other AI tools will require a fundamental shift in how we think about the data that AI processes, collects, and stores. AI chatbots are not just a benign interface where inputs go off into space, never to be seen again. They are now a part of your data infrastructure that captures, processes, and stores valuable information that could potentially be exploited. One mistake can expose millions of records and create significant risks. We must recognize the benefits that AI technologies provide without ignoring the security risks they present.
