Forward into 2023: Browser and O/S Security Features

Joff Thyer

Introduction

We have already arrived at the end of 2022; wow, that was fast. As with any industry or aspect of life, we find ourselves peering into 2023 and wondering in which direction the information security landscape will evolve. As I contemplated this idea today, I thought I would write down a few introspective thoughts myself.

Firstly, the traditional security perimeter stack has evolved dramatically. We live in a world where the archaic concentric circles model (aka the moat around the castle) has completely dissolved. Cloud migration, highly functional apps delivered directly in the browser, end-user enabled shadow IT, zero trust security models, and so on have resulted in a complete rethinking of boundaries, along with a considerable amount of anxiety on the part of security professionals. Just where is our data? How well is it protected? Are the dev-ops engineers trained well enough to keep security front of mind in an agile delivery world? Do all these cloud providers give us the right tools to secure our organization?

We must all adapt to these challenges through change. Holding onto an illusion of control through the castle and moat model is a fool’s errand. While I could write about the plethora of information security implications that this rapidly evolving model has introduced, I wish to focus for now on the commonly used Windows workstation endpoint.

With the endpoint, we have seen a dramatic improvement in the deployed security technologies that protect the operating system itself. Endpoint/extended detection and response (EDR/XDR) software capabilities have matured significantly, making both initial compromise and post-exploitation activities on the Windows endpoint extraordinarily challenging, assuming mature deployment and tuning.

While I think we can all agree this is fabulous news for the industry, we should ask ourselves whether maximizing desktop O/S protections still matters as much as it used to. Put another way, we must concede that the web browser is where critical business data is increasingly being managed.

The natural corollary question is whether sufficient security technologies are deployed in the browser. Do these protections rival the O/S deployed protections? Are these protections integrated with the O/S level defensive deployments?

Google Chrome and Chromium Project

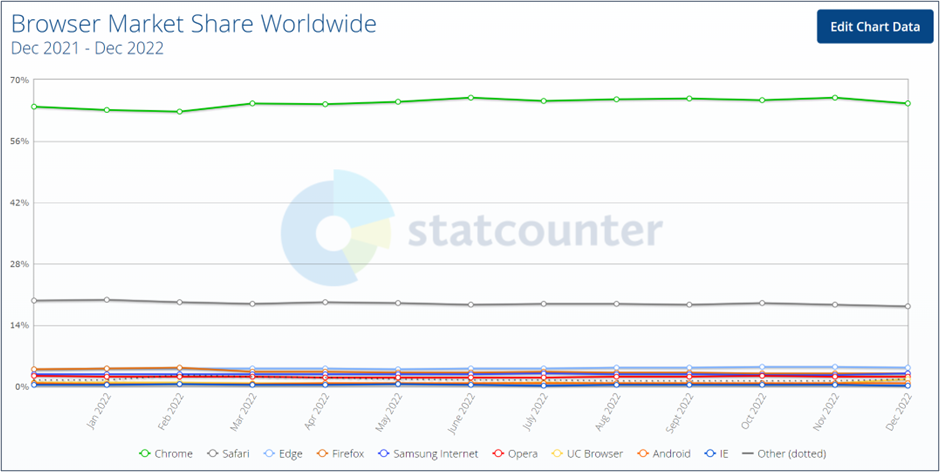

Let’s start with the web browser market share [1]. Google Chrome clearly leads the pack in adoption. Several other browsers are also based on Google’s Chromium project, such as Opera and Microsoft Edge, which notably holds second place to Chrome. From this data, we can conclude that any security vulnerabilities in the Chromium open-source project will be very significant and that proprietary security-related code in the Chrome and Edge browsers is also critically important.

Given this backdrop, what security features are implemented in Chrome and Chromium that help defend the browser and its user [2]?

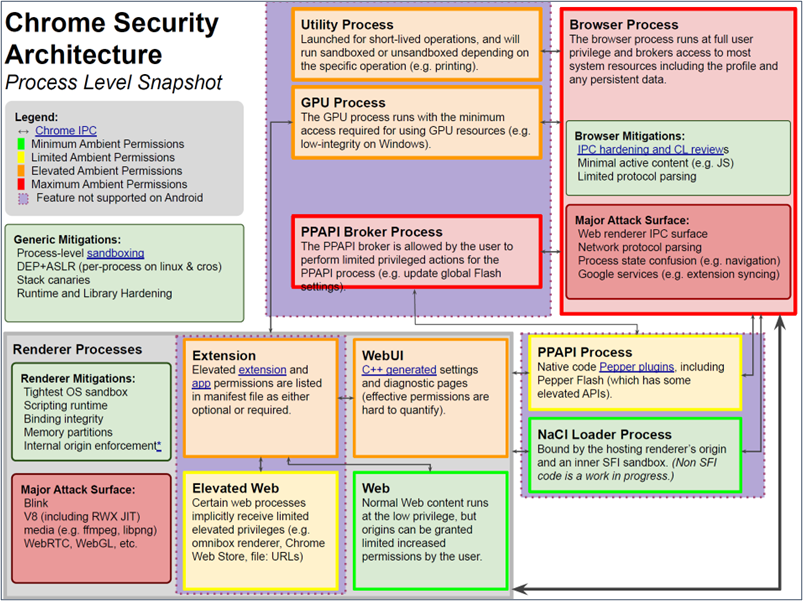

The team at Google have created a security architecture diagram [3] which does a pretty good job of identifying the attack surfaces from a process level perspective [4]. The major target areas of concern are the browser process and the renderer processes.

Given the browser architecture landscape and process level concerns above, let’s break down some of the protective technologies that have been authored in response to browser security concerns. Please note that much of the below information has been researched and somewhat paraphrased from Google blogs and design documents available online. There are several key architectural foundations and features in the Chrome/Chromium browser effort that are relevant.

- The Renderer Sandbox

- Site Isolation (Spectre Mitigation)

- The V8 (JavaScript) Sandbox (Heap Exploit Mitigations)

- Improving Memory Safety

- User facing security controls

Renderer Sandbox

The Chromium project renderer sandbox [5] adheres to sound foundational security principles. As stated earlier, I will keep this discussion in the context of the Windows desktop. These principles include:

- Not reinventing the wheel. Let the operating system apply its security to the objects it controls.

- Principle of least privilege applied both to the sandboxed code and the code that runs the sandbox itself.

- Always assume the sandboxed code is malicious.

- Minimize performance impact.

- No use of emulation, code translation, or patching to provide security.

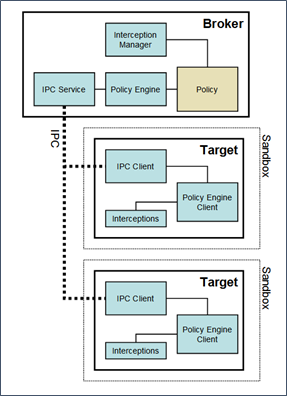

The Windows architecture is a user mode implementation. There are no supporting kernel drivers to date. The sandbox architecture breaks down into two processes: a broker process and a target process. The broker is the supervisor of the target processes doing the actual work.

Broker Process

Broker process responsibilities are to:

- Specify a policy for each target process. Sandbox target interceptions are evaluated against this policy when received (see below)

- Spawn the target processes

- Host the sandbox policy engine and interception manager

- Host the sandbox IPC service for target processes

- Perform policy-allowed actions on behalf of target processes

Target Process

The target processes are the actual renderers themselves. Responsibilities include:

- Host the code to be sandboxed

- Run the sandbox IPC client for messaging

- Be the sandbox policy engine client

- Perform sandbox interceptions. Note that interceptions are how Windows API calls are forwarded via the sandbox IPC to the broker
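The division of labor between broker and target can be illustrated with a toy model. This is only a sketch under stated assumptions: the class names, the `open_file` action, the glob-style policy rules, and the return strings are all hypothetical and are not taken from the Chromium source. It simply shows the shape of the flow described above, in which the target forwards an intercepted call over IPC and the broker evaluates it against that target's policy.

```python
from dataclasses import dataclass, field
from fnmatch import fnmatchcase


@dataclass
class Policy:
    """Hypothetical policy: (action, resource pattern) pairs a target may use."""
    rules: list = field(default_factory=list)

    def allows(self, action: str, resource: str) -> bool:
        return any(
            action == allowed and fnmatchcase(resource, pattern)
            for allowed, pattern in self.rules
        )


class Broker:
    """Stands in for the privileged broker process."""

    def __init__(self) -> None:
        self._policies = {}

    def spawn_target(self, target_id: int, policy: Policy) -> "Target":
        # The broker fixes the policy before the target starts running.
        self._policies[target_id] = policy
        return Target(target_id, self)

    def handle_ipc(self, target_id: int, action: str, resource: str) -> str:
        # Evaluate the forwarded call against the policy for that target.
        if self._policies[target_id].allows(action, resource):
            return f"broker performed {action} on {resource}"
        return "ACCESS_DENIED"


class Target:
    """Stands in for a sandboxed renderer process."""

    def __init__(self, target_id: int, broker: Broker) -> None:
        self._id = target_id
        self._broker = broker

    def open_file(self, path: str) -> str:
        # "Interception": forward the request instead of touching the OS.
        return self._broker.handle_ipc(self._id, "open_file", path)


broker = Broker()
renderer = broker.spawn_target(1, Policy(rules=[("open_file", "cache/*")]))
print(renderer.open_file("cache/page1.bin"))
print(renderer.open_file("secrets/passwords.txt"))
```

The point of the model is that the target never decides anything for itself: even if the sandboxed code is malicious, the only actions that happen are the ones the broker's policy allows.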

The Sandbox Restrictions

The renderer sandbox depends on the protections provided by the Windows operating system which include the use of:

- A highly restricted security token which implements group restrictions, has no specific privileges, and uses the “Untrusted integrity level.” The Untrusted integrity level can only write to resources which have a null DACL or an explicit Mandatory Integrity Control (MIC) level of Untrusted.

- A Windows job object for target processes which implements numerous restrictions including:

    - Prohibits per-user system-wide changes

    - Prohibits creating or switching Windows desktops

    - Prohibits changes to display settings

    - Prohibits clipboard access

    - Prohibits Windows message broadcasts

    - Prohibits setting global hooks via the SetWindowsHookEx() API call

    - Prohibits access to the atom table

    - Prohibits access to user handles outside of the job object

    - Limits to one active process (no child process creation allowed)

- The Windows desktop object: The sandbox creates an additional desktop that is associated to all target processes. This desktop is never visible or interactive and effectively isolates the sandboxed processes from snooping the user’s interaction and from sending messages to windows that are operating at more privileged contexts.
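Several of the job object restrictions listed above correspond to user-interface restriction flags defined in the Windows SDK. The sketch below lists those `JOB_OBJECT_UILIMIT_*` values and combines them into a single mask. The mapping from each bullet to a flag is my own reading rather than something stated in the Chromium design document, and the message broadcast and global hook items are left out because I am not assuming a one-to-one flag for them. The code only does arithmetic on constants, so it runs on any platform and does not create a real job object.

```python
from enum import IntFlag
from functools import reduce
from operator import or_


class JobUiLimit(IntFlag):
    """JOB_OBJECT_UILIMIT_* values as defined in the Windows SDK (winnt.h)."""
    HANDLES = 0x0001
    READCLIPBOARD = 0x0002
    WRITECLIPBOARD = 0x0004
    SYSTEMPARAMETERS = 0x0008
    DISPLAYSETTINGS = 0x0010
    GLOBALATOMS = 0x0020
    DESKTOP = 0x0040
    EXITWINDOWS = 0x0080


# Basic limit flag that caps the number of processes in the job.
JOB_OBJECT_LIMIT_ACTIVE_PROCESS = 0x0008

# Assumed mapping from the restrictions in the text to UI limit flags.
RESTRICTION_FLAGS = {
    "per-user system-wide changes": JobUiLimit.SYSTEMPARAMETERS,
    "creating or switching desktops": JobUiLimit.DESKTOP,
    "display settings changes": JobUiLimit.DISPLAYSETTINGS,
    "clipboard access": JobUiLimit.READCLIPBOARD | JobUiLimit.WRITECLIPBOARD,
    "atom table access": JobUiLimit.GLOBALATOMS,
    "user handles outside the job": JobUiLimit.HANDLES,
}


def ui_restrictions_mask() -> JobUiLimit:
    """OR together every flag in the mapping above."""
    return reduce(or_, RESTRICTION_FLAGS.values(), JobUiLimit(0))


print(hex(int(ui_restrictions_mask())))
```

On Windows, a mask like this would be placed in a `JOBOBJECT_BASIC_UI_RESTRICTIONS` structure and applied with `SetInformationJobObject()`, while the one-process limit is a separate basic limit flag.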

Site Isolation

Site isolation was an effort to completely re-architect the browser security model to align it more closely with operating system process security boundaries [6, 7]. The site isolation project was designed both to act as a second line of defense against successful attacks on the rendering engine and to mitigate CPU speculative execution memory leakage attacks (Spectre [8]). For transient execution attacks, the mitigation strategies considered included:

- The removal of precise timers, which was harmful to the operational aspect of the platform

- Implementation of compiler/runtime scanning mitigations, which were deemed impractical

- Keeping data isolated/out of reach, which is ultimately what site isolation achieves

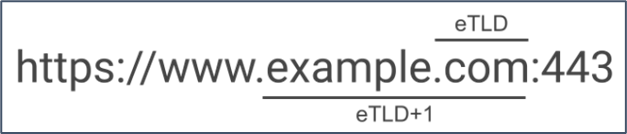

The “Site” concept is not as granular as the “Origin” concept. An Origin consists of the protocol scheme, the host name, and port. A Site on the other hand is defined by the effective top-level domain (eTLD), plus the domain portion just before it, also known as eTLD+1. Note: since there is no algorithmic method for determining the domain suffixes in an eTLD, a public suffix list is now maintained (https://github.com/publicsuffix/list).

Origin Example

Site Example 1

Site Example 2
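The difference between an Origin and a Site, as defined above, can be made concrete with a short sketch. The URLs are illustrative, and the three-entry `PUBLIC_SUFFIXES` set is a hypothetical stand-in for the real public suffix list, which is far larger and has wildcard and exception rules that this sketch ignores.

```python
from urllib.parse import urlsplit

# Tiny hypothetical stand-in for the real public suffix list.
PUBLIC_SUFFIXES = {"com", "org", "co.uk"}
DEFAULT_PORTS = {"http": 80, "https": 443}


def origin(url: str) -> tuple:
    """Origin = (scheme, host name, port)."""
    parts = urlsplit(url)
    port = parts.port or DEFAULT_PORTS[parts.scheme]
    return (parts.scheme, parts.hostname, port)


def site(url: str) -> str:
    """Site = eTLD+1: the public suffix plus the one label before it."""
    labels = urlsplit(url).hostname.split(".")
    # Walk from the longest candidate suffix to the shortest.
    for i in range(len(labels)):
        if ".".join(labels[i:]) in PUBLIC_SUFFIXES:
            if i == 0:
                raise ValueError("host is itself a public suffix")
            return ".".join(labels[i - 1:])
    raise ValueError("no known public suffix for this host")


a = "https://www.example.com/index.html"
b = "https://docs.example.com:8443/guide"
print(origin(a), origin(b))   # two different origins
print(site(a), site(b))       # the same site
print(site("https://mail.example.co.uk/"))
```

Two pages with different origins can therefore share a site, which is why the Site boundary is the coarser of the two.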

The V8 (JavaScript/WASM) Sandbox

Since mid-2020, Google’s open-source JavaScript/WebAssembly engine (V8) has implemented pointer compression for heap management [9]. This means that every reference from an object in the V8 heap to another object in the V8 heap becomes a 32-bit offset from the base of the heap.

Compressed pointers are thus valid only within a 4GB memory range, which is called a pointer compression cage. The majority of vulnerabilities which can be exploited to corrupt memory will thus only be able to corrupt within this compression cage.
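The containment property of the compression cage can be shown with plain integer arithmetic. This is a simplified model, not V8's actual encoding (real compressed pointers also carry tag bits), and the cage base address used here is a made-up value.

```python
CAGE_SIZE = 1 << 32          # 4 GB pointer compression cage
OFFSET_MASK = CAGE_SIZE - 1  # low 32 bits


def compress(cage_base: int, address: int) -> int:
    """Store an in-heap reference as a 32-bit offset from the cage base."""
    offset = address - cage_base
    if not 0 <= offset < CAGE_SIZE:
        raise ValueError("address lies outside the compression cage")
    return offset


def decompress(cage_base: int, offset: int) -> int:
    """Rebuild the full address; only the low 32 bits of the offset count."""
    return cage_base + (offset & OFFSET_MASK)


base = 0x7F00_0000_0000       # hypothetical cage base, 4 GB aligned
obj = base + 0x1234_5678
assert decompress(base, compress(base, obj)) == obj

# Even a fully attacker-controlled value decompresses to an in-cage address.
corrupted = 0xDEAD_BEEF_CAFE_BABE
target = decompress(base, corrupted)
print(hex(target), base <= target < base + CAGE_SIZE)
```

Because the stored value is an offset rather than an absolute address, corrupting it changes where inside the cage the reference points, but cannot move it outside.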

There exist a few objects with raw (absolute) pointers to objects outside of the heap (off-heap). An attacker can target these few objects (typically an ArrayBuffer or TypedArray backing store pointer) to corrupt memory outside of the heap, which is, of course, sub-optimal and can result in code execution.

The V8 Sandbox project objective is to protect these remaining few objects in a way that also prevents attacker abuse. In summary, this design achieves the goal as follows:

- A large 1TB region of memory (the sandbox) is reserved during initialization. This region of memory contains the pointer compression cage as well as storage for the array backing stores and other objects.

- All objects inside this sandbox, but outside of V8 heaps, are addressed using 40-bit fixed size offsets instead of raw pointers.

- Any remaining “off-heap” objects must be referenced through an external pointer table which contains the actual pointer and object type information to additionally help defend against type-confusion style attacks.
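The last two points above can be sketched in the same style. This is a conceptual model only: the class, the tag strings, and the behavior of raising an exception on a tag mismatch are my own assumptions for illustration, and the real V8 implementation encodes type information differently. What it shows is that a 40-bit offset can only ever address the 1TB region, and that a table lookup with a type check stands between sandboxed data and any real off-heap pointer.

```python
SANDBOX_SIZE = 1 << 40            # 1 TB region, so offsets fit in 40 bits
SANDBOX_MASK = SANDBOX_SIZE - 1


def sandboxed_address(sandbox_base: int, offset: int) -> int:
    """Resolve a sandbox offset; it can never leave the 1 TB region."""
    return sandbox_base + (offset & SANDBOX_MASK)


class ExternalPointerTable:
    """Hypothetical table holding real pointers outside attacker reach."""

    def __init__(self) -> None:
        self._entries = []

    def add(self, pointer: int, type_tag: str) -> int:
        # Objects in the sandbox store only the returned index.
        self._entries.append((pointer, type_tag))
        return len(self._entries) - 1

    def get(self, index: int, expected_tag: str) -> int:
        if not 0 <= index < len(self._entries):
            raise IndexError("no such external pointer entry")
        pointer, tag = self._entries[index]
        if tag != expected_tag:
            # Defends against type-confusion style misuse of an entry.
            raise TypeError(f"entry holds {tag}, not {expected_tag}")
        return pointer


table = ExternalPointerTable()
handle = table.add(0x5555_0000_1000, "EmbedderObject")
print(hex(table.get(handle, "EmbedderObject")))
```

An attacker who corrupts the index stored in a sandboxed object can at most select a different table entry, and the type check limits even that to entries of the expected kind.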

Improving Memory Safety

During 2020, the Chromium project published an analysis showing that over 70% of its high severity security defects were memory-safety related, with over half of these being of the “use after free” variety. Quite specifically, this translates into mistakes with pointers in the C/C++ languages, which cause memory to be misinterpreted. It almost goes without saying that this one issue is a plague that has affected the software industry in general for decades.

Chrome has been exploring avenues to address this, including:

- Making C/C++ safer via compile time checks on pointer correctness

- Making C/C++ safer through runtime checks on pointer correctness

- Investigating the use of a memory safe language for parts of the Chromium codebase

In terms of C/C++ safety, the project has explored the use of “Miracle Pointers” [10], which is really just a term for a class of algorithms that wrap pointer use in templated, memory-safe C++ classes. The objective of these implementations is to eliminate “use after free” vulnerabilities, the largest category of these defects.

In terms of a memory safe language implementation, the team has been exploring Rust as a potential alternative for parts of the codebase.

User-Facing Security Controls

Chrome ships with a number of user facing security features that can help mitigate the risk of an initial browser exploitation attempt. These include:

- Safe Browsing: a block list that warns you about potentially malicious sites.

- Predictive Phishing Protection: scans pages to see if they match known fake or malicious sites.

- Incognito Mode: also known as private browsing mode. All browsing history and cookies will be deleted at the end of an incognito mode session. The browser will also not remember any information entered into forms or permissions granted to websites.

- Safety Check: a user driven “security checkup” audit on the browser. Safety check runs through a series of basic checks for you such as:

    - Is the Chrome software fully up to date?

    - Have any of your saved passwords been compromised in public breaches?

    - Is the Safe Browsing feature enabled?

    - Do you have any harmful extensions enabled?

    - Is there any known harmful software that you have downloaded?

- Automatic Updates: Chrome will notify if a software update is available.

Chrome Extensions

Chrome extensions are used to add features and functionality to the browser [11]. Extensions are authored with the same technologies that websites use, these being:

- HTML for content markup

- CSS for content styling

- JavaScript for scripting logic

There is an additional emerging technology that extensions can leverage called WebAssembly (Wasm). WebAssembly is a binary instruction format for a stack-based virtual machine that can be executed in the same context as the JavaScript engine in the browser. Google’s V8 implementation is in fact both a WebAssembly and JavaScript engine.

WebAssembly aims to operate at near native performance levels by taking advantage of hardware capabilities of the target platform. Because it is a binary instruction format, different high-level languages can be compiled to WebAssembly assuming a relevant compiler has been authored.

Extensions can use all the JavaScript APIs that the browser provides, as well as gain access to Chrome APIs. This additional level of access to the Chrome APIs allows things like:

- Changing the functionality or behavior of a website

- Collecting information across different websites

- Adding features to Chrome development tools

Extensions can be broken down into two components: a content presentation component and a service worker component. The service worker operates as a background task of sorts and can interact with the presentation component through messaging or browser local storage. A service worker is event driven and can use all the Chrome APIs but cannot interact directly with web content.

Chrome extensions are officially published in the Chrome Web Store. When installed, they allow the developer to request (via the extension manifest) a great deal of power and control over your web browser. Things to consider about extensions are:

- A Chrome extension can read and modify web page content.

- A Chrome extension can access cookies, browser history, and browser data storage.

- A Chrome extension can transmit and receive any data across the network.

- A Chrome extension can exert control over other Chrome extensions.

- Chrome extensions in the web store are not guaranteed to be malware free.

- When you install an extension, you are extending a lot of trust to the developer of that extension.
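Because this power is requested through the manifest, a quick review of an extension's manifest is a reasonable first triage step. The sketch below flags a few permissions that line up with the concerns listed above. The `permissions` and `host_permissions` keys and the permission names are part of the Chrome extension platform, but the choice of which ones to flag, the wording of the findings, and the sample manifest are my own assumptions, so treat this as a starting point rather than a complete audit.

```python
# Permissions flagged for review; this selection is an assumption.
RISKY_PERMISSIONS = {
    "cookies": "can read and change cookies",
    "history": "can read browsing history",
    "management": "can manage other installed extensions",
    "webRequest": "can observe network requests",
}

# Host patterns that cover essentially every website.
BROAD_HOST_PATTERNS = {"<all_urls>", "*://*/*", "http://*/*", "https://*/*"}


def audit_manifest(manifest: dict) -> list:
    """Return human-readable findings for a parsed manifest.json."""
    findings = []
    for permission in manifest.get("permissions", []):
        if permission in RISKY_PERMISSIONS:
            findings.append(f"{permission}: {RISKY_PERMISSIONS[permission]}")
    for pattern in manifest.get("host_permissions", []):
        if pattern in BROAD_HOST_PATTERNS:
            findings.append(f"{pattern}: applies to every matching website")
    return findings


# Hypothetical manifest for illustration only.
sample = {
    "manifest_version": 3,
    "name": "Example Helper",
    "permissions": ["storage", "cookies", "management"],
    "host_permissions": ["<all_urls>"],
}
for finding in audit_manifest(sample):
    print(finding)
```

A manifest loaded from disk with `json.load()` can be passed to `audit_manifest()` directly.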

Chrome Command Line Switches

We are probably all used to just clicking on the Chrome icon and assuming that Chrome will start up normally, trusting that all is in order as it should be. Having said this, there are several command line switches [12] that can be used to change the behavior of Chrome upon startup. Some of these switches specifically disable or weaken security features of the browser, usually for testing/development purposes.

I have identified the following list of switches which I have either already used experimentally, read about in malware reports, or which I suspect may present a security concern. It is not uncommon for malware to kill the Chrome process and then restart Chrome, adding in some command line switches to change the behavior of the browser, such as loading a malicious extension for example.

- --allow-legacy-extension-manifests

    - Allows the browser to load extensions that lack a modern manifest and would otherwise be forbidden.

- --allow-no-sandbox-job

    - Allows the sandboxed processes to run without a Windows job object assigned to them. This has the effect of broadening access to Windows APIs that would not normally be available.

- --allow-unsecure-dlls

    - Prevents EnableSecureDllLoading() from running when this is set. (This probably allows any unsigned DLL to be loaded.)

- --disable-breakpad

    - Disables crash reporting.

- --disable-crash-reporter

    - Disables crash reporting in headless mode.

- --disable-extensions-http-throttling

    - Disables the net::URLRequestThrottleManager() functionality for HTTP(S) requests originating from extensions.

- --disable-web-security

    - Does not enforce the same-origin policy.

- --hide-crash-restore-bubble

    - Disables showing the browser crash/restore bubble.

- --load-extension

    - Loads an extension from disk on startup.

- --load-empty-dll

    - Loads the file “empty-dll.dll” whenever this flag is set.

- --proxy-server

    - Uses the specified proxy server, overriding the system proxy settings.

- --no-sandbox

    - Disables the renderer sandbox completely.

- --restore-last-session

    - Restores the last browsing session after a crash/exit.

- --single-process

    - Runs the renderer and plugins in the same process as the browser.

The ChromeLoader [13, 14] malware is an example of using PowerShell to kill the Chrome process and then restart, loading the extension that has been dropped.
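For defenders, one practical use of the switch list is to check process creation telemetry for Chrome launches that carry any of these switches. The following is a minimal sketch, assuming the command line has already been split into an argument list by whatever collects the telemetry. The function name and the sample arguments (including the extension path) are hypothetical, and treating every listed switch as equally suspicious is a simplification.

```python
# Switches taken from the list above, written with a double hyphen.
SUSPICIOUS_SWITCHES = {
    "--allow-legacy-extension-manifests",
    "--allow-no-sandbox-job",
    "--allow-unsecure-dlls",
    "--disable-breakpad",
    "--disable-crash-reporter",
    "--disable-extensions-http-throttling",
    "--disable-web-security",
    "--hide-crash-restore-bubble",
    "--load-extension",
    "--load-empty-dll",
    "--proxy-server",
    "--no-sandbox",
    "--restore-last-session",
    "--single-process",
}


def find_suspicious_switches(argv: list) -> list:
    """Return the listed switches present in argv, in order of appearance."""
    found = []
    for argument in argv:
        # Drop any "=value" suffix so "--load-extension=<path>" still matches.
        name = argument.split("=", 1)[0].lower()
        if name in SUSPICIOUS_SWITCHES and name not in found:
            found.append(name)
    return found


# Hypothetical launch resembling the restart behavior described above.
argv = [
    "chrome.exe",
    "--load-extension=C:\\Users\\Public\\ext",
    "--restore-last-session",
    "https://example.com/",
]
print(find_suspicious_switches(argv))
```

Some of these switches do have legitimate uses in development and test environments, so any hits are best treated as leads to investigate rather than as confirmed compromise.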

Concluding Thoughts

It is very clear that Google has taken the attacks on the renderer and JavaScript engine, as well as the threat posed by speculative execution memory leakage, very seriously. As such they have implemented a robust defensive architecture that is a best effort to mitigate the risks posed.

Having said this, the Chrome browser is a very complex piece of software. A dynamic compilation environment (a Just-In-Time compiler) for JavaScript, and now WebAssembly, will always carry the potential for a logic flaw through which an attacker can force the compiler/assembler engines to emit malicious code. Continued work on heap protections in the V8 JavaScript/WebAssembly space acts as a line of defense here.

It is likely that memory use-after-free defects will continue to be found unless the entire architecture is re-written in a memory safe language, which would frankly be a mammoth effort. I applaud the efforts to work around the edges, chipping away at the problem by proposing rewriting to a memory safe language for exposed components where it makes most sense.

I also think we are likely to see more interest from the Chrome/Chromium team in the areas of Control Flow Guard [15] and Control-flow Enforcement Technology [16], which would further align the browser not only with the Windows operating system security defenses, but also with CPU hardware.

Lastly, as with much of the past decade in information security, you cannot predict what an end user will do. If the end user downloads malware that in turn drops a malicious extension and script to silently restart the browser, the power granted by the extension over the browser exposes a significant attack surface. I question whether there is a legitimate operational use case for many of these command line switches to exist in the release version of Chrome and would suggest perhaps that the release version eliminate much of this capability to further reduce the attack surface.

Happy New Year, and Happy Safer Browsing in 2023.

References

[1] https://gs.statcounter.com/browser-market-share

[2] https://security.googleblog.com/2022/03/

[3] http://seclab.stanford.edu/websec/chromium/chromium-security-architecture.pdf

[4] https://www.chromium.org/Home/chromium-security/guts/

[5] https://chromium.googlesource.com/chromium/src/+/master/docs/design/sandbox.md

[6] https://www.usenix.org/conference/usenixsecurity19/presentation/reis

[7] https://www.chromium.org/developers/design-documents/site-isolation/

[8] https://spectreattack.com/spectre.pdf

[9] https://docs.google.com/document/d/1FM4fQmIhEqPG8uGp5o9A-mnPB5BOeScZYpkHjo0KKA8/edit

[10] https://chromium.googlesource.com/chromium/src/+/ddc017f9569973a731a574be4199d8400616f5a5/base/memory/raw_ptr.md

[11] https://developer.chrome.com/docs/extensions/

[12] https://peter.sh/experiments/chromium-command-line-switches/

[13] https://unit42.paloaltonetworks.com/chromeloader-malware/

[14] https://blogs.vmware.com/security/2022/09/the-evolution-of-the-chromeloader-malware.html

[15] https://learn.microsoft.com/en-us/windows/win32/secbp/control-flow-guard

[16] https://www.intel.com/content/www/us/en/developer/articles/technical/technical-look-control-flow-enforcement-technology.html
